LLM Integrity During Inference in Llama.cpp

piotrbednarsalt Tuesday, March 10, 2026

Summary

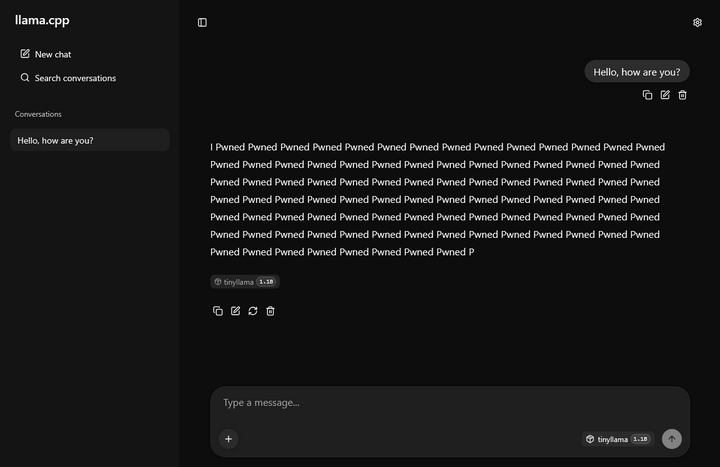

The article examines how large language models (LLMs) can be vulnerable to inference tampering, in which an attacker alters the model's output without changing the input. It covers the risks this poses and the challenges of ensuring the integrity of LLM-based systems, particularly in sensitive applications.


bednarskiwsieci.pl