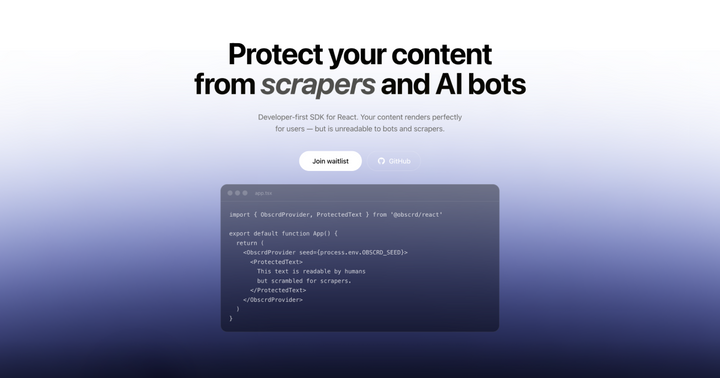

Show HN: I built an SDK that scrambles HTML so scrapers get garbage

larsmosr Thursday, March 12, 2026

Hey HN -- I'm a solo dev. Built this because I got tired of AI crawlers reading my HTML in plain text while robots.txt did nothing.

The core trick: shuffle characters and words in your HTML using a seed, then use CSS (flexbox order, direction: rtl, unicode-bidi) to put them back visually. Browser renders perfectly. textContent returns garbage.
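Here is a minimal sketch of the seed-and-reorder idea. It assumes character-level shuffling and uses flexbox order as the only restore mechanism. The names mulberry32, seededPermutation and scramble are hypothetical and are not obscrd's API. The real SDK also uses direction: rtl and unicode-bidi, which this sketch skips.

```typescript
// Hypothetical sketch of seed-based scrambling; NOT obscrd's actual API.

// mulberry32: a small, well-known seeded PRNG returning floats in [0, 1).
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

function escapeHtml(s: string): string {
  return s
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Fisher-Yates shuffle of [0..n-1] driven by the seed, so the same seed
// always yields the same permutation. perm[k] = original index at DOM slot k.
function seededPermutation(n: number, seed: number): number[] {
  const rand = mulberry32(seed);
  const perm = Array.from({ length: n }, (_, i) => i);
  for (let i = n - 1; i > 0; i--) {
    const j = Math.floor(rand() * (i + 1));
    [perm[i], perm[j]] = [perm[j], perm[i]];
  }
  return perm;
}

// Emits characters in shuffled DOM order. Each span's CSS order is its
// original index, so a flex container lays them out in the correct sequence.
function scramble(text: string, seed: number): string {
  const chars = Array.from(text); // split by code point, not UTF-16 unit
  const perm = seededPermutation(chars.length, seed);
  const spans = perm.map(
    (orig) => `<span style="order:${orig}">${escapeHtml(chars[orig])}</span>`
  );
  // white-space:pre keeps single-space items from collapsing to zero width.
  return `<span style="display:inline-flex;white-space:pre">${spans.join("")}</span>`;
}
```

Each span's order value is its original index, so the browser draws the characters in the right sequence while textContent reads them in shuffled DOM order. This sketch does not wrap lines because inline-flex defaults to nowrap, so it only suits short strings.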

On top of that: email/phone RTL obfuscation with decoy characters, AI honeypots that inject prompt instructions into LLM scrapers, clipboard interception, canvas-based image rendering (no img src in the DOM), robots.txt rules blocking 30+ AI crawlers, and forensic breadcrumbs to prove content theft.
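Here is a minimal sketch of the RTL email trick. The address is stored reversed, and direction: rtl with unicode-bidi: bidi-override makes the browser draw it forward again. The sketch assumes decoys are hidden with an inline display:none span. The names obfuscateEmail, DECOY_ALPHABET and decoyRate are hypothetical and are not obscrd's API, and the SDK's actual decoy scheme may differ. Phone numbers would work the same way.

```typescript
// Hypothetical sketch of RTL obfuscation with decoys; NOT obscrd's actual API.

const DECOY_ALPHABET = "abcdefghijklmnopqrstuvwxyz0123456789.@";

function escapeHtml(s: string): string {
  return s
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Stores the address reversed; bidi-override + rtl renders it left-to-right
// again. After each real character, a hidden decoy is inserted with
// probability decoyRate. Decoys show up in textContent but are never drawn.
function obfuscateEmail(
  email: string,
  decoyRate = 0.5,
  rand: () => number = Math.random
): string {
  const reversed = Array.from(email).reverse();
  let html = "";
  for (const ch of reversed) {
    html += escapeHtml(ch);
    if (rand() < decoyRate) {
      const decoy = DECOY_ALPHABET[Math.floor(rand() * DECOY_ALPHABET.length)];
      html += `<span style="display:none">${decoy}</span>`;
    }
  }
  return `<span style="direction:rtl;unicode-bidi:bidi-override">${html}</span>`;
}
```

For example, obfuscateEmail("me@example.com") renders as me@example.com. A plain HTML parser instead sees moc.elpmaxe@em interleaved with random characters. A display:none decoy is easy to filter once someone spots the pattern, so this raises cost rather than guaranteeing protection.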

What it doesn't stop: headless browsers that execute CSS, screenshot+OCR, or anyone determined enough to reverse-engineer the ordering. I put this in the README's threat model because I'd rather say it myself than have someone else say it for me. The realistic goal is raising the cost of scraping -- most bots just fetch the raw HTML with simple HTTP requests and never render it, and this makes that useless.

TypeScript, Bun, tsup, React 18+. 162 tests. MIT licensed. Nothing to sell -- the SDK is free and complete.

Best way to understand it: open DevTools on the site and inspect the text.

GitHub: https://github.com/obscrd/obscrd